The Moral Character of Scientific Work

The first paper we covered was Phil Rogaway’s The Moral Character of Cryptographic Work. The paper centers around the moral and ethical responsibilities of cryptographers but its themes are relevant and applicable to computer science as a whole. This was a useful paper for us to discuss early in the course so that we could cover important notions for the rest of the course.

Summary

The paper is divided into four parts:

- Social responsibility of scientists & engineers: a broad discussion about the ethical responsibilities of scientists;

- Political character of cryptographic work: discusses the inherent political nature of cryptography and the apolitical culture of part of the academic cryptography community;

- The dystopian world of pervasive surveillance: describes two opposing framings of mass surveillance;

- Creating a more just and useful field: describes a set of problems that Rogaway believes cryptographers should work on.

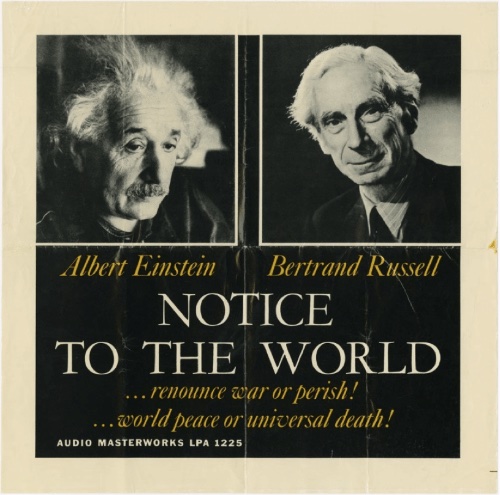

Social responsibility of scientists & engineers. In this section, Rogaway discusses the post-World War II scientific “ethic of responsibility”. He describes the Russell-Einstein manifesto from 1955, which called for nuclear disarmament, and the Pugwash Conferences on Science and World Affairs, which played an important role in disarmament and diplomacy. He then argues that technical work itself can be political and that, in response, scientists can engage in politics in one of two ways. The first is via implicit politics, where a scientist affects politics as a byproduct of their technical work. The second is via overt politics, where a scientist affects politics through activism.

Rogaway then describes three historical events that shaped scientists’ ethic of responsibility: the experience of physicists after the invention of nuclear weapons; the Nuremberg trials, which established the idea that following orders does not absolve one from moral responsibility; and the rise of the environmental movement. According to Rogaway, these events led to a sort of “golden era” of scientific responsibility where ethics and social concerns were the norm in scientific work.

Yet, he points out, in recent decades the ethic of responsibility in science has eroded considerably. He describes several instances of scientists and other academics who explicitly choose to take amoral and apolitical stands. This is summarized in the following quote from Stanley Fish:

…don’t confuse your academic obligations with the obligation to save the world; that’s not your job as an academic.

Rogaway speculates as to what caused this decline and believes that it is, in part, due to the rise of technological optimism. Technological optimism is the idea that technology is inherently positive and that technological innovation will solve most of humanity’s problems. Rogaway’s argument is, roughly speaking, that technological optimists equate technology with progress and this eliminates the need to think critically about one’s work. In other words, since the act of creating technology is inherently good, why should we, as technology builders, even worry about the consequences of our work? A contrary perspective is that of technological pessimists, who believe that technology does not lead to progress and point to weapons of mass destruction and the environmental crisis as two of many examples of technology’s negative impact. Somewhere in the middle are technological contextualists, who acknowledge technology’s negative impacts but believe it can be directed and harnessed towards positive outcomes.

Rogaway ends this section with a reminder of scientists’ ethical responsibilities. The first is that one’s role is not simply to “do no harm” but to actively pursue social good. The second is that the responsibilities of a scientist extend beyond herself all the way to her community, i.e., as a scientist you are morally responsible for the impact of your scientific community.

The political character of cryptographic work. Here, Rogaway argues that cryptography is inherently political but that its political nature has been erased in part of the academic cryptography community. He recalls that, in the early days of modern cryptography (late ’70s and early ’80s), cryptography research was often motivated by political questions. He then describes how academic cryptography eventually fragmented into two communities represented, roughly speaking, by the IACR community (CRYPTO, Eurocrypt, Asiacrypt and TCC) and the PETS community. Rogaway illustrates his point using two papers that were published around the same time: Chaum’s Untraceable electronic mail, return addresses, and digital pseudonyms [Chaum81], which is highly influential at PETS; and Goldwasser and Micali’s Probabilistic Encryption [GM82], which is highly influential in IACR conferences. Rogaway then states:

Papers citing [GM82] frame problems scientifically. Authors claim to solve important technical questions. The tone is assertive, with hints of technological optimism. In marked contrast, papers citing [Chaum81] frame problems socio-politically. Authors speak about some social problem or need. The tone is reserved and explicitly contextualist views are routine.

He then discusses another cryptographic community: the cypherpunks, whose principles can be roughly articulated as the pursuit of individual privacy against governments and corporations through the use of open and freely available cryptography. The section ends with a survey of various cryptographic primitives that Rogaway argues embed political and sometimes even authoritarian principles.

The dystopian world of pervasive surveillance. In this section, Rogaway presents two different framings of mass surveillance: one from law enforcement, which frames privacy as a personal good and (national) security as a collective good; and one from surveillance studies, which frames both privacy and security as collective goods. The law enforcement argument is that the widespread deployment of encryption is increasing personal privacy at the cost of national security. Law enforcement agencies are “going dark” due to encryption and their ability to protect the nation is rapidly deteriorating. The privacy and surveillance studies argument, on the other hand, is that law enforcement has access to an unprecedented amount of information about people and that this is certainly enough for it to accomplish its mission. Trying to stop the deployment of encryption and other cryptographic technologies not only weakens people’s individual privacy but also weakens the social goods that privacy enables, like dissent and social progress.

Creating a more just and useful field. In the final section, Rogaway describes technical problems cryptographers should work on if they want to help build a better field. This discussion is very crypto-centric so we won’t summarize it here but only mention that he advocates for cryptographers to expand the problems they work on to include surveillance-motivated research. Rogaway also discusses funding, claiming that most of the funding for cryptography research (in the U.S.) comes from the Department of Defense (DoD) and advocates that academics “think twice, and then again, about accepting military funding”.

Our Discussion

We agreed with many of Rogaway’s points, in particular about the pervasiveness of technological optimism in our field and the decline of ethical responsibility in science.

Ethical responsibility. Most of us agreed that scientists should be bound by the ethics of responsibility that Rogaway summarizes. One of us, however, did not agree and believed that scientists and engineers should focus on creating technology and not on technology’s social implications, which should be left to others. Though this was a minority view, we also understand that Brown is perhaps not completely representative of the entire political spectrum and that this perspective is surely more common in the wider computer science community.

Another issue we discussed was that the scientists and communities that shaped the post-war ethic of responsibility were overwhelmingly white and male. Many of us felt that this was important because people’s values and moral code are shaped by their background and experiences. Without diverse perspectives, the range and scope of possible ethical codes that were considered at the time were likely very narrow.

The decline of ethical responsibility. We discussed why ethical responsibility in science declined. Rogaway’s hypothesis is that it is, in part, linked to an increase in individualism and in technological optimism. There is, however, another perhaps more cynical hypothesis: maybe scientists have never been that ethically engaged in the first place. The historical events that led to the post-war golden era of responsibility were atypical. The use of nuclear weapons and the concentration camps of Nazi Germany were two of the worst atrocities committed in human history. So it is not surprising that scientists had to reckon with these events and respond in a way that changed their view of moral responsibility.

But is this really the standard to which scientists and engineers should be held today? It should not take genocide and the creation of new weapons of mass destruction to galvanize our community to develop and adhere to ethical standards. These atrocities were highly visible and hard to ignore. But the everyday minutiae of working on morally gray problems are easier to overlook. And if we don’t provide everyday scientists and engineers with the tools and frameworks to evaluate the ethics and social impact of their own work, we will continue to leave morality aside in the pursuit of academic and entrepreneurial success.

With this in mind, is it then surprising that the ethos of responsibility did not survive? It was created as a reaction to extreme events but it did not fundamentally change how science is conducted or how students in STEM are educated.

A new ethics of responsibility. We also talked about whether today’s ethic of responsibility should still be founded on the post-war ethic. Should it ask for more? If so, what? Many of our students were born in the 2000s and grew up in a world that is markedly different from Einstein and Russell’s. This also related to our discussion about the lack of diversity in post-war science. So the question was: given the current state of the world and a (slightly?) more diverse scientific community, what should today’s ethic of responsibility look like?

Fragmentation of academic cryptography. Those of us that worked in cryptography agreed with Rogaway’s description of our field and of the apolitical culture of the IACR community. Nobody felt this was particularly controversial; it is obvious to anyone who reads the proceedings and attends the conferences.

Since this was obvious, our conversation focused more on why this might be the case. One of Rogaway’s observations is that the IACR community is technologically optimistic whereas the PETS community is technologically contextualist. Another reason, however, might be that the cryptography community lacks diversity, both demographic and intellectual. In fact, we talked about who exactly becomes a cryptographer. What experiences do they bring to the table? How are they trained? Many (if not most) people in this community come from a math and theoretical computer science background. Most have been educated and trained at one of a handful of institutions. Most of the community’s academic lineage can even be traced back to a handful of people.

We agreed with Rogaway’s call for the community to expand the scope of problems it works on. But we also wanted the community to be more intentional about diversifying itself. A scientist’s choice of problems is shaped by who they are. Including people of different backgrounds would expand the scope of research and provide wider perspectives. IACR sponsors non-US conferences like Eurocrypt, Asiacrypt and Africacrypt, but anyone familiar with these venues knows that they are not designed to promote a local view of cryptographic research; i.e., the same problems studied at CRYPTO are studied at Asiacrypt and at Africacrypt. Can we really have a conversation about the moral character of cryptographic work without expanding who has access to which platforms or addressing how we gate-keep as a community?

Funding. For context, our discussions occurred against the backdrop of the Jeffrey Epstein scandal and Ronan Farrow’s story about the MIT Media Lab’s financial relationship with Epstein. So we spent a lot of time discussing funding. While Rogaway’s funding critique centered around individual academics’ choice of taking DoD money, ours centered around the institutions in which these academics work, i.e., universities. Asking individual faculty to be circumspect about their funding is great, but if the institutions they work for: (1) do not fund their research; but (2) evaluate and promote them based on their research; and (3) pressure them to obtain funding so that they can pay their summer salaries and fund their PhD students; then these institutions need to shoulder some of the responsibility. To be clear, none of us felt that apportioning blame to universities absolved faculty of responsibility for their decisions, and certainly not Joi Ito and the Media Lab. But we did feel that any conversation about funding needs to include a broader discussion about how academic research is funded and the incentives that creates for individual faculty.

Editorial note on algorithmic fairness. Looking back on this discussion, the issues surrounding the fragmentation of the cryptography community seem particularly relevant to what is happening in the field of algorithmic fairness. The first conference on the subject, FAccT, advocates for interdisciplinary work that is rooted in and informed by the social context surrounding fairness, accountability and transparency. From the FAccT webpage:

Research challenges are not limited to technological solutions regarding potential bias, but include the question of whether decisions should be outsourced to data- and code-driven computing systems. We particularly seek to evaluate technical solutions with respect to existing problems, reflecting upon their benefits and risks; to address pivotal questions about economic incentive structures, perverse implications, distribution of power, and redistribution of welfare; and to ground research on fairness, accountability, and transparency in existing legal requirements.

On the other hand, the more recent Symposium on Foundations of Responsible Computing (FORC) emphasises the mathematical, algorithmic, statistical and economic aspects of responsible computing. From the FORC website:

The Symposium on Foundations of Responsible Computing (FORC) is a forum for mathematical research in computation and society writ large. The Symposium aims to catalyze the formation of a community supportive of the application of theoretical computer science, statistics, economics and other relevant analytical fields to problems of pressing and anticipated societal concern.

Just as cryptography is inherently political, algorithmic fairness is inherently social and political, so it will be interesting to see how this community chooses to evolve.